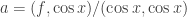

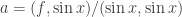

Exercise 3.4.21. Given the function $f(x) = \sin 2x$ on the interval $-\pi \le x \le \pi$, what is the closest function $a \cos x + b \sin x$ to $f$? What is the closest line $c + dx$ to $f$?

Answer: To find the closest function $a \cos x + b \sin x$ to the function $f(x) = \sin 2x$ we first project $f$ onto the function $\cos x$ on the given interval to obtain $a$, and then project $f$ onto $\sin x$ to obtain $b$. (Since $\cos x$ and $\sin x$ are orthogonal on this interval, the two projections can be computed independently.)

We project $f$ onto $\cos x$ by taking the dot product of $f$ with $\cos x$ and then normalizing by dividing by the dot product of $\cos x$ with itself:

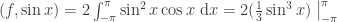

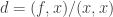

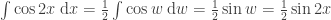

The numerator is

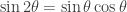

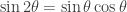

where we used the trigonometric identity $\sin 2x = 2 \sin x \cos x$.

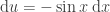

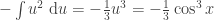

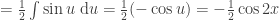

To integrate we substitute the variable $u = \cos x$ so that $du = -\sin x \, dx$. We then have

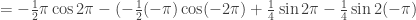

![= -\frac{2}{3} \cos^3 \pi - [-\frac{2}{3} \cos^3 (-\pi)] = -\frac{2}{3} (-1)^3 - [-\frac{2}{3} (-1)^3]](https://s0.wp.com/latex.php?latex=%3D+-%5Cfrac%7B2%7D%7B3%7D+%5Ccos%5E3+%5Cpi+-+%5B-%5Cfrac%7B2%7D%7B3%7D+%5Ccos%5E3+%28-%5Cpi%29%5D+%3D+-%5Cfrac%7B2%7D%7B3%7D+%28-1%29%5E3+-+%5B-%5Cfrac%7B2%7D%7B3%7D+%28-1%29%5E3%5D&bg=ffffff&fg=333333&s=0&c=20201002)

![= -\frac{2}{3} \cdot (-1) - [-\frac{2}{3} \cdot (-1)] = \frac{2}{3} - \frac{2}{3} = 0](https://s0.wp.com/latex.php?latex=%3D+-%5Cfrac%7B2%7D%7B3%7D+%5Ccdot+%28-1%29+-+%5B-%5Cfrac%7B2%7D%7B3%7D+%5Ccdot+%28-1%29%5D+%3D+%5Cfrac%7B2%7D%7B3%7D+-+%5Cfrac%7B2%7D%7B3%7D+%3D+0&bg=ffffff&fg=333333&s=0&c=20201002)

Since the numerator in the expression for $a$ is zero, we have $a = 0$.

(Note that we do not need to calculate the denominator in the expression for $a$. We know it must be positive, and thus the quotient is defined. See below for a sketch of a proof of this.)
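The positivity of the denominators can also be checked numerically. The following is a minimal sketch, not part of the original solution, assuming the interval $-\pi \le x \le \pi$ from the exercise. The helper names `integrate` and `inner` are illustrative; the integral is approximated with composite Simpson's rule.

```python
import math

def integrate(g, lo, hi, n=2000):
    """Approximate the integral of g over [lo, hi] with composite Simpson's rule (n even)."""
    h = (hi - lo) / n
    total = g(lo) + g(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(lo + i * h)
    return total * h / 3

def inner(g, h, lo=-math.pi, hi=math.pi):
    """Inner product of two functions: the integral of g(x) * h(x) over [lo, hi]."""
    return integrate(lambda x: g(x) * h(x), lo, hi)

# Inner products of cos x and sin x with themselves (the two denominators).
cos_norm_sq = inner(math.cos, math.cos)
sin_norm_sq = inner(math.sin, math.sin)
print(cos_norm_sq, sin_norm_sq)
```

Both values should come out close to $\pi$, which is what the identities $\cos^2 x = (1 + \cos 2x)/2$ and $\sin^2 x = (1 - \cos 2x)/2$ give when integrated over a full period.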

We next project $f$ onto $\sin x$ by taking the dot product of $f$ with $\sin x$ and then normalizing by dividing by the dot product of $\sin x$ with itself:

$$b = \frac{\int_{-\pi}^{\pi} \sin 2x \sin x \, dx}{\int_{-\pi}^{\pi} \sin^2 x \, dx}$$

The numerator is

$$\int_{-\pi}^{\pi} \sin 2x \sin x \, dx = \int_{-\pi}^{\pi} 2 \sin x \cos x \sin x \, dx = 2 \int_{-\pi}^{\pi} \sin^2 x \cos x \, dx$$

where we used the trigonometric identity $\sin 2x = 2 \sin x \cos x$.

To integrate we substitute the variable $u = \sin x$ so that $du = \cos x \, dx$. We then have

$$2 \int_{-\pi}^{\pi} \sin^2 x \cos x \, dx = \left. \frac{2}{3} \sin^3 x \right|_{-\pi}^{\pi} = \frac{2}{3} \sin^3 \pi - \frac{2}{3} \sin^3 (-\pi) = 0 - 0 = 0$$

Since the numerator in the expression for $b$ is zero, we have $b = 0$. (Again, we are guaranteed that the denominator is positive and the quotient defined.)

So the closest function $a \cos x + b \sin x$ to $f(x) = \sin 2x$ is $0 \cdot \cos x + 0 \cdot \sin x = 0$.
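As a numerical cross-check of this result, here is a short sketch (not from the original post) that computes both projection coefficients for $f(x) = \sin 2x$ on $-\pi \le x \le \pi$. The helpers `integrate` and `inner` are hypothetical names, and the integrals are approximated with composite Simpson's rule rather than evaluated exactly.

```python
import math

def integrate(g, lo, hi, n=2000):
    """Approximate the integral of g over [lo, hi] with composite Simpson's rule (n even)."""
    h = (hi - lo) / n
    total = g(lo) + g(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(lo + i * h)
    return total * h / 3

def inner(g, h, lo=-math.pi, hi=math.pi):
    """Inner product of two functions: the integral of g(x) * h(x) over [lo, hi]."""
    return integrate(lambda x: g(x) * h(x), lo, hi)

def f(x):
    return math.sin(2 * x)

# Projection coefficients: (f, basis) / (basis, basis).
a = inner(f, math.cos) / inner(math.cos, math.cos)
b = inner(f, math.sin) / inner(math.sin, math.sin)
print(a, b)
```

Both coefficients should be zero up to floating-point rounding, consistent with the closest function being the zero function.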

To find the closest function $c + dx$ to the function $f(x) = \sin 2x$ we first project $f$ onto the constant function with the value 1 on the given interval to obtain $c$, and then project $f$ onto the function $x$ to obtain $d$. (The functions 1 and $x$ are orthogonal on this interval, since $\int_{-\pi}^{\pi} x \, dx = 0$, so the two projections can again be computed independently.)

We project $f$ onto the constant function with value 1 by taking the dot product of $f$ with 1 and then normalizing by dividing by the dot product of 1 with itself:

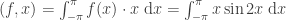

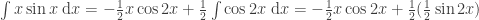

The numerator is

$$\int_{-\pi}^{\pi} \sin 2x \cdot 1 \, dx = \int_{-\pi}^{\pi} \sin 2x \, dx$$

To integrate we substitute the variable $u = 2x$ so that $du = 2 \, dx$. We then have

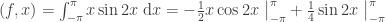

$$\int_{-\pi}^{\pi} \sin 2x \, dx = \frac{1}{2} \int_{-2\pi}^{2\pi} \sin u \, du = \left. -\frac{1}{2} \cos u \right|_{-2\pi}^{2\pi} = -\frac{1}{2} + \frac{1}{2} = 0$$

Since the numerator in the expression for $c$ is zero we have $c = 0$. (Recall that the denominator is guaranteed to be positive.)

We project $f$ onto the function $x$ by taking the dot product of $f$ with $x$ and then normalizing by dividing by the dot product of $x$ with itself:

$$d = \frac{\int_{-\pi}^{\pi} x \sin 2x \, dx}{\int_{-\pi}^{\pi} x^2 \, dx}$$

The numerator is

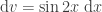

To integrate this we use integration by parts, taking advantage of the formula $\int u \, dv = uv - \int v \, du$. (The following is adapted from a post on socratic.org.) We let $u = x$ and $dv = \sin 2x \, dx$. Then $du$ is simply $dx$, and $v = -\frac{1}{2} \cos 2x$ (the antiderivative of $\sin 2x$, as discussed above).

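We then have

$$\int x \sin 2x \, dx = -\frac{1}{2} x \cos 2x + \frac{1}{2} \int \cos 2x \, dx$$

The second integral we can evaluate by substituting $w = 2x$ and $dw = 2 \, dx$ so that

$$\int \cos 2x \, dx = \frac{1}{2} \int \cos w \, dw = \frac{1}{2} \sin w = \frac{1}{2} \sin 2x$$

Substituting for the second integral above we then have

$$\int x \sin 2x \, dx = -\frac{1}{2} x \cos 2x + \frac{1}{4} \sin 2x$$

We then have

$$\int_{-\pi}^{\pi} x \sin 2x \, dx = \left. \left( -\frac{1}{2} x \cos 2x + \frac{1}{4} \sin 2x \right) \right|_{-\pi}^{\pi} = -\frac{\pi}{2} - \frac{\pi}{2} = -\pi$$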

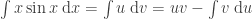

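The denominator in the expression for $d$ is

$$\int_{-\pi}^{\pi} x^2 \, dx = \left. \frac{1}{3} x^3 \right|_{-\pi}^{\pi} = \frac{\pi^3}{3} - \left( -\frac{\pi^3}{3} \right) = \frac{2 \pi^3}{3}$$

We then have

$$d = \frac{-\pi}{2 \pi^3 / 3} = -\frac{3}{2 \pi^2}$$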

The straight line $c + dx$ closest to the function $f(x) = \sin 2x$ is thus the line $-\frac{3}{2 \pi^2} x$.
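The line coefficients can be sanity-checked numerically as well. This is a sketch under the same assumptions as the exercise ($f(x) = \sin 2x$ on $-\pi \le x \le \pi$), using composite Simpson's rule in place of the exact integrals; `integrate`, `inner`, `one`, and `ident` are illustrative names, not anything from the original post.

```python
import math

def integrate(g, lo, hi, n=2000):
    """Approximate the integral of g over [lo, hi] with composite Simpson's rule (n even)."""
    h = (hi - lo) / n
    total = g(lo) + g(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(lo + i * h)
    return total * h / 3

def inner(g, h, lo=-math.pi, hi=math.pi):
    """Inner product of two functions: the integral of g(x) * h(x) over [lo, hi]."""
    return integrate(lambda x: g(x) * h(x), lo, hi)

def f(x):
    return math.sin(2 * x)

def one(x):
    return 1.0      # the constant function 1

def ident(x):
    return x        # the function x

# Projection coefficients for the line c + d x.
c = inner(f, one) / inner(one, one)
d = inner(f, ident) / inner(ident, ident)

# Closed-form slope -3 / (2 pi^2) for comparison.
d_exact = -3 / (2 * math.pi ** 2)
print(c, d, d_exact)
```

The computed `d` should agree with $-3/(2\pi^2)$ to many decimal places, and `c` should be zero up to rounding.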

ADDENDUM: Suppose that $f$ is a continuous function defined on the interval $[a, b]$ and $f(t) \ne 0$ for some $t \in [a, b]$. Then we want to show that the inner product $(f, f) = \int_a^b f(x)^2 \, dx > 0$.

The basic idea of the proof is as follows: The function $f(x)^2$ is always nonnegative, and thus its integral over the interval $[a, b]$ is nonnegative as well. If $f(t)$ is nonzero for some $t$ then since $f$ is continuous $f(x)$ will also be nonzero for some interval $[c, d]$ that includes $t$, with $c < d$. This implies that the integral of $f(x)^2$ over that subinterval $[c, d]$ will be positive.

But we also have $\int_a^b f(x)^2 \, dx \ge \int_c^d f(x)^2 \, dx$ since $f(x)^2 \ge 0$ and $[c, d]$ is contained within $[a, b]$. So if $\int_c^d f(x)^2 \, dx > 0$ then we also have $\int_a^b f(x)^2 \, dx > 0$ and the inner product $(f, f)$ is positive.
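One way to make the positivity step precise is sketched below. This is an assumed filling-in of the argument, not something stated in the original post: it picks $\varepsilon = |f(t)|/2$ and uses continuity at $t$ to choose the subinterval $[c, d]$ (one-sided if $t$ is an endpoint, which still gives $c < d$ when $a < b$).

```latex
% Assumptions (not in the original post): \varepsilon = |f(t)|/2, and [c, d] is a
% subinterval of [a, b] containing t, with c < d, on which |f(x) - f(t)| < \varepsilon.
\begin{align*}
|f(x)| &\ge |f(t)| - |f(x) - f(t)| > \tfrac{1}{2} |f(t)|
  \quad \text{for all } x \in [c, d], \\
\int_a^b f(x)^2 \, dx &\ge \int_c^d f(x)^2 \, dx
  \ge (d - c) \cdot \tfrac{1}{4} f(t)^2 > 0.
\end{align*}
```

The explicit lower bound $(d - c) f(t)^2 / 4$ replaces the informal claim that the integral over $[c, d]$ "will be positive".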

NOTE: This continues a series of posts containing worked out exercises from the (out of print) book Linear Algebra and Its Applications, Third Edition by Gilbert Strang.

If you find these posts useful I encourage you to also check out the more current Linear Algebra and Its Applications, Fourth Edition, Dr Strang’s introductory textbook Introduction to Linear Algebra, Fifth Edition and the accompanying free online course, and Dr Strang’s other books.
