I recently read the (excellent) online resource Quantum Computing for the Very Curious by Andy Matuschak and Michael Nielsen. Upon reading the proof that all length-preserving matrices are unitary and trying it out myself, I came to believe that there is an error in the proof as written, specifically in the step that tries to show that the off-diagonal entries of $M^\dagger M$ are zero if $M$ is length-preserving.

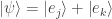

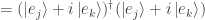

Using the identity $\langle\psi|M^\dagger M|\psi\rangle = \| M|\psi\rangle \|^2$, a suitable choice of $|\psi\rangle = |e_j\rangle + |e_k\rangle$ with $j \neq k$, and the fact that $M$ is length-preserving, Nielsen first shows that $(M^\dagger M)_{jk} + (M^\dagger M)_{kj} = 0$ for $j \neq k$.

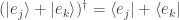

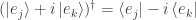

He then goes on to write “But what if we’d done something slightly different, and instead of using $|\psi\rangle = |e_j\rangle + |e_k\rangle$ we’d used $|\psi\rangle = |e_j\rangle - |e_k\rangle$? … I won’t explicitly go through the steps – you can do that yourself – but if you do go through them you end up with the equation: $(M^\dagger M)_{jk} - (M^\dagger M)_{kj} = 0$.”

I was an undergraduate physics and math major, but either I never worked with bra-ket notation and Hermitian conjugates or I’ve forgotten whatever I knew about them. In any case, in working through this I could not get the same result as Nielsen; I simply ended up once again proving that $(M^\dagger M)_{jk} + (M^\dagger M)_{kj} = 0$.

After some thought and experimentation I concluded that the key is to choose $|\psi\rangle = |e_j\rangle + i|e_k\rangle$. Below is my (possibly mistaken!) attempt at a correct proof that all length-preserving matrices are unitary.
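To see why a complex coefficient matters, here is a minimal sketch in plain Python. The matrix `A` is a hypothetical stand-in for $M^\dagger M$ (it is not taken from the original essay): it is Hermitian with ones on the diagonal and purely imaginary off-diagonal entries, so its off-diagonal entries sum to zero without being zero. The helper name `quad_form` is also my own. Indices are 0-based here.

```python
def quad_form(a, psi):
    """Return <psi|A|psi> = sum over j, k of conj(psi_j) * A_jk * psi_k."""
    n = len(psi)
    return sum(psi[j].conjugate() * a[j][k] * psi[k]
               for j in range(n) for k in range(n))

# Hypothetical stand-in for M^dagger M: A_01 + A_10 = 0, yet A is not
# the identity matrix.
A = [[1, 1j],
     [-1j, 1]]

plus = quad_form(A, [1, 1])    # psi = |e_0> + |e_1>
minus = quad_form(A, [1, -1])  # psi = |e_0> - |e_1>
imag = quad_form(A, [1, 1j])   # psi = |e_0> + i|e_1>

print(plus, minus, imag)
```

Both real-coefficient choices return 2, exactly what the identity matrix would give, so they cannot tell `A` apart from $I$. Only the choice with the factor of $i$ returns something else (0 for this `A`).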

Proof: Let $M$ be a length-preserving matrix such that for any vector $|\psi\rangle$ we have $\| M|\psi\rangle \| = \| |\psi\rangle \|$. We wish to show that $M$ is unitary, i.e., $M^\dagger M = I$.

We first show that the diagonal elements of $M^\dagger M$, or $(M^\dagger M)_{jj}$, are equal to 1.

To do this we start with the unit vectors $|e_j\rangle$ and $|e_k\rangle$ with 1 in positions $j$ and $k$ respectively, and 0 otherwise. The product $M^\dagger M|e_k\rangle$ is then the $k$th column of $M^\dagger M$, and $\langle e_j|M^\dagger M|e_k\rangle$ is the $j$th entry of $M^\dagger M|e_k\rangle$ or $(M^\dagger M)_{jk}$.
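The way unit vectors pick out columns and entries can be sketched in a few lines of plain Python. The matrix `A` below is an arbitrary stand-in for $M^\dagger M$, and the helper names (`matvec`, `unit_vector`, `bracket`) are my own. Note that the code counts positions from 0.

```python
def matvec(a, v):
    """Multiply matrix a (a list of rows) by column vector v."""
    return [sum(a[r][c] * v[c] for c in range(len(v))) for r in range(len(a))]

def unit_vector(n, k):
    """Vector of length n with 1 in position k and 0 elsewhere."""
    return [1 if r == k else 0 for r in range(n)]

def bracket(u, a, v):
    """Return <u|A|v>, conjugating the entries of u."""
    av = matvec(a, v)
    return sum(u[r].conjugate() * av[r] for r in range(len(u)))

# Arbitrary stand-in for M^dagger M (an assumption for illustration).
A = [[1, 2j, 3],
     [4, 5, 6j],
     [7j, 8, 9]]

column_1 = matvec(A, unit_vector(3, 1))                       # column 1 of A
entry_0_1 = bracket(unit_vector(3, 0), A, unit_vector(3, 1))  # entry A[0][1]

print(column_1, entry_0_1)
```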

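From the general identity $\langle\psi|M^\dagger M|\psi\rangle = \| M|\psi\rangle \|^2$ we also have $\langle e_j|M^\dagger M|e_j\rangle = \| M|e_j\rangle \|^2$. But since $M$ is length-preserving we have $\| M|e_j\rangle \|^2 = \| |e_j\rangle \|^2 = 1$ since $|e_j\rangle$ is a unit vector.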

We thus have $(M^\dagger M)_{jj} = \langle e_j|M^\dagger M|e_j\rangle = \| M|e_j\rangle \|^2 = 1$. So all diagonal entries of $M^\dagger M$ are 1.
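The general identity $\langle\psi|M^\dagger M|\psi\rangle = \| M|\psi\rangle \|^2$ holds for any matrix, length-preserving or not. As a quick numerical sanity check (a sketch, not part of the proof), the plain-Python snippet below evaluates both sides for an arbitrary matrix `M` and vector `psi` that I made up for illustration. The helper names are also my own.

```python
def dagger(m):
    """Conjugate transpose of matrix m (a list of rows)."""
    rows, cols = len(m), len(m[0])
    return [[m[r][c].conjugate() for r in range(rows)] for c in range(cols)]

def matmul(a, b):
    """Matrix product a @ b."""
    return [[sum(a[r][i] * b[i][c] for i in range(len(b)))
             for c in range(len(b[0]))] for r in range(len(a))]

def matvec(a, v):
    """Multiply matrix a by column vector v."""
    return [sum(a[r][c] * v[c] for c in range(len(v))) for r in range(len(a))]

def inner(u, v):
    """Return <u|v>, conjugating the entries of u."""
    return sum(x.conjugate() * y for x, y in zip(u, v))

# Arbitrary (not length-preserving) example values, chosen for illustration.
M = [[1, 2j],
     [3, 4]]
psi = [1 + 1j, 2]

lhs = inner(psi, matvec(matmul(dagger(M), M), psi))  # <psi|M^dagger M|psi>
m_psi = matvec(M, psi)
rhs = inner(m_psi, m_psi)                            # ||M|psi>||^2

print(lhs, rhs)
```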

We next show that the non-diagonal elements of $M^\dagger M$, or $(M^\dagger M)_{jk}$ with $j \neq k$, are equal to zero.

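Let $|\psi\rangle = |e_j\rangle + |e_k\rangle$ with $j \neq k$. Since $M$ is length-preserving we have

$$\langle\psi|M^\dagger M|\psi\rangle = \| M|\psi\rangle \|^2 = \| |\psi\rangle \|^2 = 1^2 + 1^2 = 2.$$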

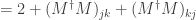

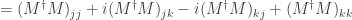

We also have $\langle\psi|M^\dagger M|\psi\rangle = \langle\psi|M^\dagger M\left(|e_j\rangle + |e_k\rangle\right)$ where $\langle\psi| = \left(|e_j\rangle + |e_k\rangle\right)^\dagger$. From the definition of the dagger operation and the fact that the nonzero entries of $|e_j\rangle$ and $|e_k\rangle$ have no imaginary parts we have $\langle\psi| = \langle e_j| + \langle e_k|$.

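We then have

$$\begin{aligned}
\langle\psi|M^\dagger M|\psi\rangle &= \left(\langle e_j| + \langle e_k|\right) M^\dagger M \left(|e_j\rangle + |e_k\rangle\right) \\
&= (M^\dagger M)_{jj} + (M^\dagger M)_{jk} + (M^\dagger M)_{kj} + (M^\dagger M)_{kk} \\
&= 2 + (M^\dagger M)_{jk} + (M^\dagger M)_{kj}
\end{aligned}$$

since we previously showed that all diagonal entries of $M^\dagger M$ are 1.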

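Since $\langle\psi|M^\dagger M|\psi\rangle = 2$ and also $\langle\psi|M^\dagger M|\psi\rangle = 2 + (M^\dagger M)_{jk} + (M^\dagger M)_{kj}$ we thus have $(M^\dagger M)_{jk} + (M^\dagger M)_{kj} = 0$ for $j \neq k$.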

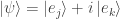

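Now let $|\psi\rangle = |e_j\rangle + i|e_k\rangle$ with $j \neq k$. Again we have $\| M|\psi\rangle \|^2 = \| |\psi\rangle \|^2$ since $M$ is length-preserving, so that

$$\langle\psi|M^\dagger M|\psi\rangle = \| M|\psi\rangle \|^2 = \| |\psi\rangle \|^2 = |1|^2 + |i|^2 = 2.$$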

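Since $i|e_k\rangle$ has an imaginary part for its (single) nonzero entry, in performing the dagger operation and taking complex conjugates we obtain $\langle\psi| = \langle e_j| - i\langle e_k|$. We thus have

$$\langle\psi|M^\dagger M|\psi\rangle = \left(\langle e_j| - i\langle e_k|\right) M^\dagger M \left(|e_j\rangle + i|e_k\rangle\right).$$

We also have

$$\begin{aligned}
\left(\langle e_j| - i\langle e_k|\right) M^\dagger M \left(|e_j\rangle + i|e_k\rangle\right) &= (M^\dagger M)_{jj} + i(M^\dagger M)_{jk} - i(M^\dagger M)_{kj} - i^2 (M^\dagger M)_{kk} \\
&= 2 + i\left[(M^\dagger M)_{jk} - (M^\dagger M)_{kj}\right],
\end{aligned}$$

using $-i^2 = 1$ and the fact that all diagonal entries of $M^\dagger M$ are 1.

Since $\langle\psi|M^\dagger M|\psi\rangle = 2$ we have $2 + i\left[(M^\dagger M)_{jk} - (M^\dagger M)_{kj}\right] = 2$ or $i\left[(M^\dagger M)_{jk} - (M^\dagger M)_{kj}\right] = 0$ so that $(M^\dagger M)_{jk} - (M^\dagger M)_{kj} = 0$.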

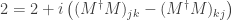

But we showed above that $(M^\dagger M)_{jk} + (M^\dagger M)_{kj} = 0$. Adding the two equations, the terms for $(M^\dagger M)_{kj}$ cancel out and we get $2(M^\dagger M)_{jk} = 0$, that is, $(M^\dagger M)_{jk} = 0$ for $j \neq k$. So all nondiagonal entries of $M^\dagger M$ are equal to zero.
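The "add the two equations" step works for any square matrix, not just $M^\dagger M$: the values of $\langle\psi|A|\psi\rangle$ on the unit vectors, on $|e_j\rangle + |e_k\rangle$, and on $|e_j\rangle + i|e_k\rangle$ are enough to recover the entry $A_{jk}$. Below is a minimal plain-Python sketch of that recovery. The function names and the test matrix `A` are my own assumptions for illustration, and indices are 0-based.

```python
def quad_form(a, psi):
    """Return <psi|A|psi> = sum over j, k of conj(psi_j) * A_jk * psi_k."""
    n = len(psi)
    return sum(psi[j].conjugate() * a[j][k] * psi[k]
               for j in range(n) for k in range(n))

def probe(n, entries):
    """Vector of length n that is zero except at the given {index: value} entries."""
    return [entries.get(r, 0) for r in range(n)]

def off_diagonal(a, j, k):
    """Recover A_jk (j != k) using only values of the form <psi|A|psi>."""
    n = len(a)
    diag = quad_form(a, probe(n, {j: 1})) + quad_form(a, probe(n, {k: 1}))
    # psi = e_j + e_k gives diag + (A_jk + A_kj)
    total = quad_form(a, probe(n, {j: 1, k: 1})) - diag
    # psi = e_j + i e_k gives diag + i (A_jk - A_kj); multiply by -i to undo the i
    diff = -1j * (quad_form(a, probe(n, {j: 1, k: 1j})) - diag)
    return (total + diff) / 2

# Arbitrary example matrix (an assumption, not from the original essay).
A = [[2, 3 + 1j],
     [5 - 2j, 7]]

print(off_diagonal(A, 0, 1), off_diagonal(A, 1, 0))
```

In the proof itself, every one of these values is fixed by length preservation (1 on each unit vector, 2 on each two-term vector), so `total` and `diff` are both zero and the recovered entry is zero.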

Since all diagonal entries of $M^\dagger M$ are equal to 1 and all nondiagonal entries of $M^\dagger M$ are equal to zero, we have $M^\dagger M = I$ and thus the matrix $M$ is unitary.

Since we assumed $M$ was a length-preserving matrix we have thus shown that all length-preserving matrices are unitary.
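As a numerical sanity check (not a substitute for the proof), the plain-Python sketch below takes one sample matrix and confirms that it both preserves the lengths of a few test vectors and satisfies $M^\dagger M = I$ up to floating-point error. The sample matrix, the test vectors, and the helper names are all my own choices for illustration.

```python
import math

def dagger(m):
    """Conjugate transpose of matrix m (a list of rows)."""
    rows, cols = len(m), len(m[0])
    return [[m[r][c].conjugate() for r in range(rows)] for c in range(cols)]

def matmul(a, b):
    """Matrix product a @ b."""
    return [[sum(a[r][i] * b[i][c] for i in range(len(b)))
             for c in range(len(b[0]))] for r in range(len(a))]

def matvec(a, v):
    """Multiply matrix a by column vector v."""
    return [sum(a[r][c] * v[c] for c in range(len(v))) for r in range(len(a))]

def norm(v):
    """Euclidean length of a complex vector."""
    return math.sqrt(sum(abs(x) ** 2 for x in v))

# Hypothetical sample matrix with complex entries (chosen for illustration).
s = 1 / math.sqrt(2)
M = [[s, s * 1j],
     [s * 1j, s]]

samples = [[1, 0], [0, 1], [1, 1], [1, 1j], [2 - 1j, 0.5 + 3j]]

# Largest deviation of ||M psi|| from ||psi|| over the sample vectors.
length_error = max(abs(norm(matvec(M, v)) - norm(v)) for v in samples)

# Largest deviation of any entry of M^dagger M from the identity matrix.
product = matmul(dagger(M), M)
identity_error = max(abs(product[r][c] - (1 if r == c else 0))
                     for r in range(2) for c in range(2))

print(length_error, identity_error)
```

Both errors should be at the level of floating-point rounding for this sample matrix.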
